How Bing SERP Features Improve LLM Accuracy, and Why Developers Should Use Them

Large Language Models often fail for a simple reason: they lack fresh, structured, and contextually rich data. Traditional web search APIs like Bing return lists of links, but modern AI systems require more than that. They need intent, context, and semantic signals.

This is where Bing SERP feature extraction becomes critical, especially when accessed through platforms like Crawleo.

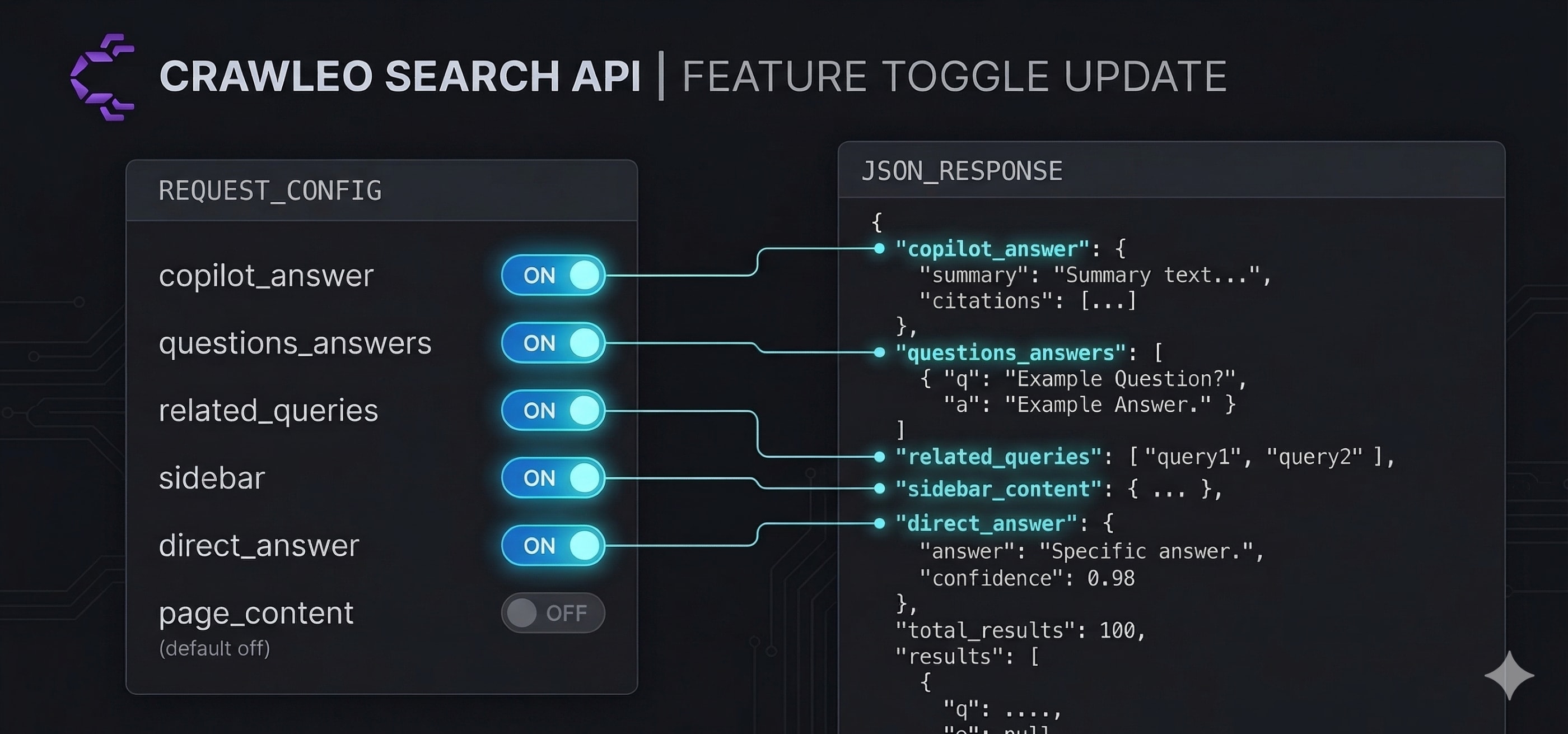

With the new /search toggles, developers can control exactly which SERP components are returned, enabling more precise, efficient, and accurate LLM responses.

This article explains how these features improve accuracy, and why integrating them through Crawleo is a strong architectural decision for AI systems.

The Problem: Why Raw Search Results Are Not Enough

Standard search APIs typically return:

- Blue links

- Titles

- Snippets

For LLMs, this creates several issues:

- Missing intent signals

- No structured question-answer pairs

- Lack of entity context

- High token cost when crawling everything blindly

This forces developers to build additional layers of parsing, ranking, and summarization.

What Bing SERP Features Add to LLM Pipelines

The updated Bing /search endpoint, available through Crawleo, introduces fine-grained control over high-value SERP components.

These include:

1. Copilot Answers

- AI-generated summaries directly from Bing

- Provide high-level synthesized knowledge

Impact on LLMs:

- Reduces hallucination by grounding responses

- Acts as a fast first-pass summary

2. People Also Ask (questions_answers)

- Real user questions related to the query

- Structured as question-answer pairs

Impact on LLMs:

- Improves query expansion

- Helps agents anticipate follow-up questions

- Enhances conversational accuracy

3. Related Queries

- Alternative search formulations

- Closely related intents

Impact on LLMs:

- Enables better retrieval breadth

- Improves semantic coverage in RAG

4. Sidebar (Knowledge Panel)

- Entity-based structured data

- Includes facts, relationships, and metadata

Impact on LLMs:

- Provides authoritative entity grounding

- Reduces factual errors

5. Direct Answers

- Immediate factual responses

- Extracted from high-confidence sources

Impact on LLMs:

- Improves precision for factual queries

- Reduces unnecessary crawling

Why These Features Increase LLM Accuracy

1. Structured Context Instead of Raw Text

LLMs perform better when given structured signals instead of unprocessed HTML.

SERP features provide:

- Pre-ranked relevance

- Semantic grouping

- Intent classification

This reduces ambiguity during generation.

2. Reduced Hallucination Risk

By injecting:

- Copilot summaries

- Direct answers

- Knowledge panels

the model is anchored to information Bing has already surfaced, rather than relying only on what it learned during training.

3. Better Retrieval Augmentation

Instead of retrieving 10 links and hoping for relevance, developers can:

- Use People Also Ask for query expansion

- Use related queries for coverage

- Use direct answers for precision

This creates a multi-layer retrieval strategy.
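As a rough sketch of the query-expansion layer, the function below merges the original query with People Also Ask questions and related queries, then removes near-duplicates. The response shape is an assumption made for illustration (a `questions_answers` list of dicts with a `question` key, and a `related_queries` list of strings); check the actual payload before relying on it.

```python
def expand_queries(original: str, serp: dict, limit: int = 5) -> list[str]:
    """Collect the original query plus deduplicated follow-ups from SERP features."""
    candidates = [original]
    # ASSUMPTION: each People Also Ask item is a dict with a "question" key.
    candidates += [qa.get("question", "") for qa in serp.get("questions_answers") or []]
    # ASSUMPTION: related queries arrive as a list of plain strings.
    candidates += list(serp.get("related_queries") or [])

    seen: set[str] = set()
    expanded: list[str] = []
    for text in candidates:
        key = " ".join(text.lower().split())  # normalize case and whitespace
        if key and key not in seen:
            seen.add(key)
            expanded.append(text.strip())
    return expanded[:limit]


# Hypothetical sample payload, not real API output.
sample = {
    "questions_answers": [{"question": "What is RAG?", "answer": "A retrieval technique."}],
    "related_queries": ["rag pipeline", "What is  rag?"],
}
queries = expand_queries("what is rag", sample)
```

Each string in `queries` can then be sent to the retriever as its own lookup, with `limit` capping how many extra searches one user question can trigger.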

4. Lower Token Usage

Since page content is now opt-in, developers can:

- Avoid unnecessary crawling

- Only fetch content when needed

This significantly reduces token consumption in LLM pipelines.

Controlling SERP Extraction with /search Toggles

Using Crawleo, developers can directly control Bing SERP extraction behavior without building custom scraping infrastructure.

The Bing API now allows explicit control over what data is returned.

Example: Minimal Search Response

GET /search?query=python+programming&copilot_answer=false&questions_answers=false&related_queries=false&sidebar=false&direct_answer=false

Use this when:

- You only need links

- You plan to handle everything downstream
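The minimal request above can also be assembled programmatically. This is a small sketch: the toggle names come from the request shown, while `BASE_URL` is a placeholder host and authentication is left out, since neither is specified here.

```python
from urllib.parse import urlencode

# Placeholder host: substitute the real API base URL and add authentication.
BASE_URL = "https://api.example.com"

# The five SERP feature toggles named in this article.
SERP_TOGGLES = (
    "copilot_answer",
    "questions_answers",
    "related_queries",
    "sidebar",
    "direct_answer",
)


def build_search_url(query: str, enabled: frozenset[str] = frozenset()) -> str:
    """Return a /search URL with every toggle set explicitly to true or false."""
    unknown = set(enabled) - set(SERP_TOGGLES)
    if unknown:
        raise ValueError(f"unknown toggles: {sorted(unknown)}")
    params = {"query": query}
    for name in SERP_TOGGLES:
        params[name] = "true" if name in enabled else "false"
    return f"{BASE_URL}/search?{urlencode(params)}"


# With no toggles enabled, this reproduces the links-only request.
minimal_url = build_search_url("python programming")
```

Setting every toggle explicitly keeps the request independent of whatever the server-side defaults happen to be.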

Example: Structured + Content Retrieval

GET /search?query=python+programming&markdown=true

Use this when:

- You want RAG-ready content

- You need clean markdown for embeddings
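Once markdown comes back, it usually has to be split before embedding. The sketch below shows one simple approach, splitting at headings and capping chunk length; it is an illustration rather than part of the API, and `max_chars` is an arbitrary default to tune for your embedding model.

```python
import re


def chunk_markdown(markdown: str, max_chars: int = 1200) -> list[str]:
    """Split markdown at headings, then cap each chunk at max_chars."""
    # Split before every line that starts with 1-6 '#' characters and a space.
    sections = re.split(r"(?m)^(?=#{1,6} )", markdown)
    chunks: list[str] = []
    for section in sections:
        text = section.strip()
        # Oversized sections are cut into fixed-size windows; empty ones are skipped.
        for start in range(0, len(text), max_chars):
            chunks.append(text[start:start + max_chars])
    return chunks


chunks = chunk_markdown("# A\ntext\n## B\nmore")
```

Note that a `#` comment at the start of a line inside a fenced code block would also be treated as a heading, so pages heavy on code may need a smarter splitter.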

Key Insight

Page content is now opt-in, which makes responses:

- Smaller

- Faster

- Cheaper

How Crawleo Makes This Even More Powerful

Crawleo builds on top of Bing search and adds critical capabilities for AI systems.

According to the Crawleo project context:

- It provides real-time search and crawling in one API

- Supports multiple output formats including Markdown and clean HTML

- Is optimized for LLM pipelines and RAG systems

- Enforces strict zero data retention

Key Advantages in This Context

You can explore these capabilities directly via:

- Website: Crawleo

- MCP endpoint: Crawleo MCP endpoint

- RapidAPI listing: Crawleo on RapidAPI

1. Unified Search + Crawl Pipeline

Instead of:

- Calling Bing

- Then crawling manually

Crawleo allows:

- Search

- Extract SERP features

- Crawl results

all in a single request.

2. LLM-Optimized Outputs

Crawleo returns:

- Clean HTML

- Plain text

- Markdown

This reduces preprocessing overhead significantly.

3. Cost Efficiency

With page content now optional, combining:

- SERP feature toggles

- Selective crawling

leads to major cost savings in production systems.

4. Privacy-First Architecture

Crawleo does not store:

- Queries

- Crawled content

This is critical for enterprise AI deployments.

Best Practices for Using Bing SERP Features in LLM Systems

When using Crawleo, these practices become easier to implement due to built-in search and crawling integration.

1. Start With SERP Features Before Crawling

Use:

- direct_answer

- copilot_answer

Only crawl if additional depth is required.
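A minimal sketch of this answer-first check follows. It assumes `direct_answer` and `copilot_answer` arrive as plain-text fields on the response, which is an illustrative guess at the payload shape rather than a documented contract.

```python
from typing import Optional


def pick_grounding(serp: dict) -> tuple[Optional[str], str]:
    """Return (answer_text, source), preferring SERP answers over a crawl."""
    for field in ("direct_answer", "copilot_answer"):
        value = serp.get(field)
        # ASSUMPTION: both fields hold plain text when present.
        if isinstance(value, str) and value.strip():
            return value.strip(), field
    # Nothing usable came back, so the caller should crawl for depth.
    return None, "crawl"


answer, source = pick_grounding({"copilot_answer": "Python is a programming language."})
```

When `source` is `"crawl"`, the pipeline falls through to fetching page content; otherwise the answer text can be passed to the model directly.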

2. Use People Also Ask for Query Expansion

Feed these into your retriever to improve recall.

3. Combine Sidebar Data With RAG

Use knowledge panel data as:

- grounding context

- metadata layer
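One way to use the knowledge panel as grounding context is to render it as a short fact block placed ahead of the retrieved passages. The sketch below assumes a sidebar with `title`, `description`, and a `facts` mapping; those field names are hypothetical and should be adapted to the real response.

```python
def sidebar_to_context(sidebar: dict) -> str:
    """Render knowledge-panel data as a compact grounding block for a prompt."""
    # ASSUMPTION: the sidebar has "title", "description" and a "facts" mapping.
    lines = [f"Entity: {sidebar.get('title', 'unknown')}"]
    if sidebar.get("description"):
        lines.append(f"Summary: {sidebar['description']}")
    for key, value in (sidebar.get("facts") or {}).items():
        lines.append(f"- {key}: {value}")
    return "\n".join(lines)


def build_prompt(question: str, sidebar: dict, passages: list[str]) -> str:
    """Place entity facts ahead of retrieved passages, then the question."""
    parts = ["Known facts:", sidebar_to_context(sidebar), "", "Retrieved passages:"]
    parts += [f"[{i}] {p}" for i, p in enumerate(passages, start=1)]
    parts += ["", f"Question: {question}"]
    return "\n".join(parts)


context = sidebar_to_context(
    {"title": "Python", "facts": {"Designed by": "Guido van Rossum"}}
)
```

The same fact mapping can also be stored alongside each embedded chunk as metadata, so that retrieval can filter by entity.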

4. Enable Markdown Only When Needed

Avoid unnecessary token usage by keeping page content disabled by default.

5. Build Multi-Stage Retrieval

- SERP features for intent

- Selective crawling for depth

- LLM synthesis

When You Should Definitely Use These Features

If you are building on top of Crawleo, enabling these features becomes a default best practice for accuracy.

You should enable Bing SERP feature extraction if you are building:

- AI search engines

- RAG pipelines

- Autonomous agents

- Research assistants

- SEO intelligence tools

These systems benefit directly from structured search signals.

Limitations to Be Aware Of

- SERP features depend on query type and availability

- Copilot answers may vary in depth

- Not all queries return knowledge panels

Developers should design fallback strategies.
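A simple starting point for a fallback strategy is to normalize every response so that missing features become empty defaults, then branch on what is actually present. The field names below mirror the toggles in this article, but the types are assumptions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class SerpFeatures:
    # ASSUMPTION: answers are strings, lists hold items, sidebar is a mapping.
    direct_answer: str = ""
    copilot_answer: str = ""
    questions_answers: list = field(default_factory=list)
    related_queries: list = field(default_factory=list)
    sidebar: dict = field(default_factory=dict)

    def missing(self) -> list[str]:
        """Names of features that came back empty for this query."""
        return [name for name, value in vars(self).items() if not value]


def normalize(response: dict) -> SerpFeatures:
    """Coerce absent or null fields to empty defaults so callers never see None."""
    defaults = SerpFeatures()
    cleaned = {
        name: response.get(name) or default
        for name, default in vars(defaults).items()
    }
    return SerpFeatures(**cleaned)


features = normalize({"copilot_answer": "A short summary.", "sidebar": None})
```

Downstream code can then check `features.missing()` and, for example, fall back to crawling when both answer fields are empty.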

Conclusion

Bing SERP features are not just enhancements; they are critical signals that transform how LLMs retrieve and reason over web data.

With the new /search toggles, developers gain precise control over:

- Data structure

- Payload size

- Retrieval strategy

When combined with Crawleo and its unified search and crawling infrastructure, this creates a highly efficient, accurate, and scalable foundation for modern AI systems.

The result is simple: better answers, lower cost, and more reliable AI.

To get started:

- Visit Crawleo

- Explore MCP integration: Crawleo MCP endpoint

- Try the API: Crawleo on RapidAPI

External Links and References

Here are useful links developers can use when implementing the concepts in this article:

- Crawleo official website: Crawleo

- Crawleo MCP endpoint: Crawleo MCP endpoint

- Crawleo RapidAPI listing: Crawleo on RapidAPI

- Model Context Protocol docs: Model Context Protocol documentation

Example: Full Crawleo + Bing SERP Strategy

Below is a practical example of how to combine SERP features with Crawleo in a production workflow:

GET /search?query=llm+accuracy&copilot_answer=true&questions_answers=true&related_queries=true&sidebar=true&direct_answer=true

Then:

- Use direct_answer for an immediate response

- Use questions_answers for query expansion

- Use related_queries for broader retrieval

- Select the top URLs and pass them to the crawler

- Use markdown output for embedding

This creates a high-accuracy, low-cost RAG pipeline.
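The URL-selection step can be sketched as follows. It assumes the search results arrive in rank order as dicts with a `url` key, which is a hypothetical shape; keeping one URL per host is a design choice made here to avoid crawling the same site repeatedly, not something the API prescribes.

```python
from urllib.parse import urlsplit


def select_urls(results: list[dict], max_urls: int = 3) -> list[str]:
    """Pick up to max_urls results in rank order, keeping one URL per host."""
    if max_urls <= 0:
        return []
    chosen: list[str] = []
    seen_hosts: set[str] = set()
    for item in results:
        url = item.get("url", "")  # ASSUMPTION: each result carries a "url" key
        host = urlsplit(url).netloc.lower()
        if not host or host in seen_hosts:
            continue  # skip malformed URLs and repeat hosts
        seen_hosts.add(host)
        chosen.append(url)
        if len(chosen) == max_urls:
            break
    return chosen


# Hypothetical ranked results, not real API output.
ranked = [
    {"url": "https://a.com/1"},
    {"url": "https://a.com/2"},
    {"url": "https://b.org/x"},
    {"url": ""},
    {"url": "https://c.io"},
]
to_crawl = select_urls(ranked, max_urls=2)
```

Only the URLs in `to_crawl` are then sent to the crawler with markdown output enabled, which keeps the token budget bounded by `max_urls`.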

Final Note

If you are not using SERP features, you are leaving accuracy on the table.

Modern LLM systems are not just about retrieval; they are about structured retrieval.

Bing SERP features, combined with Crawleo, give you that structure.