How to Connect Crawleo to LM Studio via MCP

If you're running local models in LM Studio, you're already ahead of the curve. But out of the box, your models are isolated from the live web: they can't search, crawl, or look anything up in real time. That's the gap Crawleo + MCP fills.

Once you connect Crawleo's MCP (Model Context Protocol) server to LM Studio, your local models get access to real-time web tools without any custom integration code. The only setup is a short JSON config entry.

What is MCP?

Model Context Protocol (MCP) is an open standard that lets AI assistants call external tools through a common interface. Instead of building a custom integration for each tool, you point your AI client to an MCP server, and the client discovers and calls the tools that server exposes.

Crawleo provides a hosted MCP server that gives your models access to:

- search_web — AI-powered web search in real time

- crawl_web — Extract clean, LLM-optimized Markdown from any webpage

- google_search — Direct Google Search results

- google_maps — Location and business data via Google Maps

- headful_browser — Full browser rendering for JavaScript-heavy pages
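To make the protocol a little more concrete: MCP clients and servers exchange JSON-RPC 2.0 messages, with `tools/list` used to discover tools and `tools/call` used to invoke one. LM Studio sends these for you, so the Python sketch below is only an illustration of the message shapes. The `query` argument name is an assumption made for the example; the real argument names come from the input schema each tool reports in `tools/list`.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC request ids only need to be unique within a session


def list_tools_request() -> dict:
    """Build the JSON-RPC 2.0 message an MCP client sends to discover tools."""
    return {"jsonrpc": "2.0", "id": next(_ids), "method": "tools/list"}


def call_tool_request(name: str, arguments: dict) -> dict:
    """Build the JSON-RPC 2.0 message an MCP client sends to invoke a tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


if __name__ == "__main__":
    print(json.dumps(list_tools_request(), indent=2))
    # "query" is a hypothetical argument name, not taken from Crawleo's docs.
    request = call_tool_request("search_web", {"query": "latest MCP spec"})
    print(json.dumps(request, indent=2))
```

In practice you never build these by hand. The model decides when to call a tool, and LM Studio turns that decision into a `tools/call` message.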

Prerequisites

- LM Studio installed on your machine

- A Crawleo API key — get one at crawleo.dev

Step 1: Open the Developer Window

- Launch LM Studio

- Click the "Developer" tab in the top-left navigation

Step 2: Edit the MCP Configuration

- Inside the Developer window, click the editor tab at the top

- Select the mcp.json option

- Add the Crawleo MCP server to your configuration:

{
  "crawleo": {
    "url": "https://api.crawleo.dev/mcp",
    "headers": {
      "Authorization": "Bearer YOUR_API_KEY"
    }
  }
}

🔐 Replace YOUR_API_KEY with your actual Crawleo API key from your dashboard.
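If you would rather script this step than edit the file by hand, the following Python sketch merges the Crawleo entry into an existing mcp.json without touching other servers. It assumes the file keeps its servers under a top-level `mcpServers` object (the Cursor-style layout); check your own mcp.json before relying on that. The function name and the file path you pass in are hypothetical.

```python
import json
from pathlib import Path


def add_crawleo_server(config_path: Path, api_key: str) -> dict:
    """Merge a Crawleo entry into an mcp.json file, keeping other servers."""
    config = {}
    if config_path.exists():
        text = config_path.read_text(encoding="utf-8").strip()
        if text:
            config = json.loads(text)

    # Assumption: servers are listed under a top-level "mcpServers" object.
    servers = config.setdefault("mcpServers", {})
    servers["crawleo"] = {
        "url": "https://api.crawleo.dev/mcp",
        "headers": {"Authorization": f"Bearer {api_key}"},
    }

    config_path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return config
```

A call might look like `add_crawleo_server(Path("mcp.json"), "YOUR_API_KEY")`, where the path points at wherever your LM Studio installation stores the file. Reading the key from an environment variable instead of hard-coding it keeps it out of your shell history and scripts.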

Step 3: Verify the Integration

- Switch to the "Chat" window

- Open the right sidebar

- Navigate to the "Integrations" tab

You should see mcp/crawleo listed. Expanding it reveals all available tools:

- search_web

- crawl_web

- google_search

- google_maps

- headful_browser

✅ If you see these, Crawleo is fully connected and ready to use.
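The same check can be expressed in code, for example in a script that has already fetched a `tools/list` response from the server. The sketch below is a minimal illustration in Python: it assumes the standard MCP result shape, where tools are listed under a `tools` array and each has a `name`, and it compares those names against the five tools this post lists. The helper name is hypothetical.

```python
EXPECTED_TOOLS = {
    "search_web",
    "crawl_web",
    "google_search",
    "google_maps",
    "headful_browser",
}


def missing_tools(tools_list_result: dict) -> set[str]:
    """Return expected Crawleo tool names absent from a tools/list result."""
    available = {tool["name"] for tool in tools_list_result.get("tools", [])}
    return EXPECTED_TOOLS - available


if __name__ == "__main__":
    # Hand-written sample result, not real server output.
    sample = {"tools": [{"name": "search_web"}, {"name": "crawl_web"}]}
    print(sorted(missing_tools(sample)))
```

An empty set means every expected tool is present. Anything else points to a config or API key problem worth rechecking in Step 2.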

What You Can Do Now

With Crawleo MCP active, your local LM Studio models can:

- Search the live web and return up-to-date results

- Crawl any URL and extract clean Markdown for analysis or RAG pipelines

- Pull Google Search results directly into your chat context

- Look up location data and business info via Google Maps

- Render and extract content from JavaScript-heavy websites

This is especially useful for developers building research tools, AI agents, or any workflow that needs current information.

Related Posts

When to Use JavaScript Rendering (And When You Don’t Need It)

Not sure whether a page needs JavaScript rendering to crawl correctly? This guide shows when to use `render_js=true`, when standard HTTP crawling is enough, and how to use a cost-efficient fallback strategy with Crawleo.

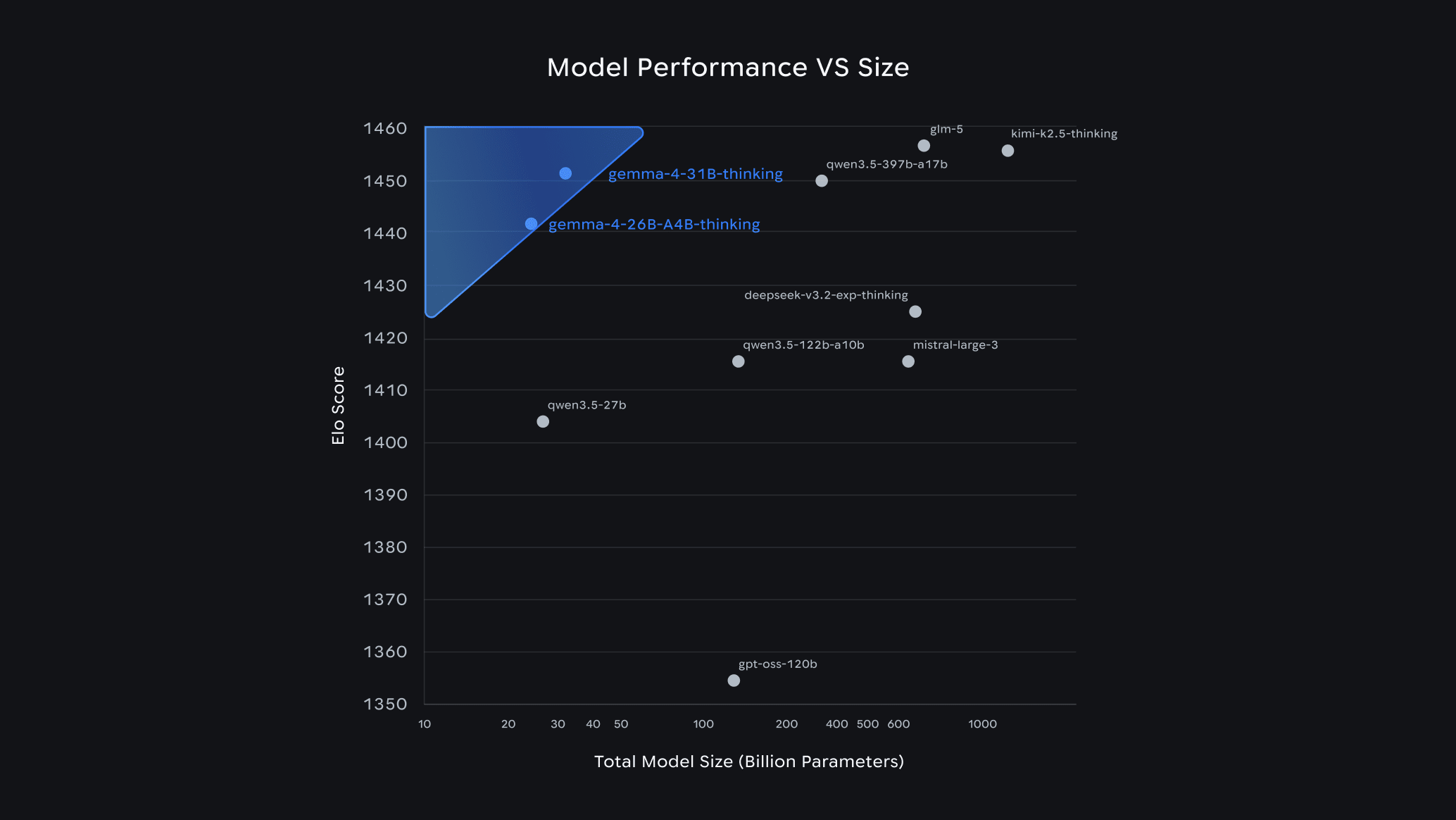

Gemma 4 Guide: How to Choose the Right Model & Run It Locally with Ollama

Google's Gemma 4 is one of the most capable open-source AI model families available today — and it runs completely free on your own hardware. In this guide, we break down each model variant, help you pick the right one for your setup, and walk you through a quick-start installation with Ollama.