When to Use JavaScript Rendering (And When You Don’t Need It)

Modern websites are built in different ways. Some pages ship complete content in the initial HTML (easy to crawl). Others rely on JavaScript to fetch and paint content in the browser (harder to crawl without rendering).

Crawleo supports both approaches:

- Standard HTTP crawl (render_js=false): faster and cheaper for most pages.

- Browser rendering (render_js=true): more powerful for JavaScript-heavy pages, but costs more credits.

This guide helps you choose the right mode confidently, without guessing.

What “JavaScript rendering” means (in crawling terms)

When you enable JavaScript rendering, Crawleo uses a browser-like environment to load the page, execute scripts, and then extract content after the page has rendered.

Use it when the page’s real content appears only after JavaScript runs.

The default: start without rendering

In most cases, you should start with:

- render_js=false (the default in many scraping setups)

- Request Markdown (markdown=true) for clean, structured content

Why:

- Lower cost: HTTP crawl is 1 credit/URL (per Crawleo docs)

- Faster: no browser to spin up

- More predictable: fewer moving parts

A simple request looks like:

curl -X GET "https://api.crawleo.dev/crawl?urls=https://example.com&markdown=true" \
  -H "x-api-key: YOUR_API_KEY"

When you should turn on render_js=true

Enable JavaScript rendering when the page is client-rendered or content is injected dynamically.

Strong signals you need JS rendering

- The page looks “empty” in a normal crawl: you get navigation, headers, footers—but not the main article/product data.

- Content is loaded after a spinner/skeleton UI (common in SPA frameworks).

- Pagination, filters, or tabs change content dynamically (and the server HTML doesn’t include the data).

- The site requires JavaScript to populate text (not just styling).

Try:

curl -X GET "https://api.crawleo.dev/crawl?urls=https://example.com&render_js=true&markdown=true" \
  -H "x-api-key: YOUR_API_KEY"
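If you build these requests in code rather than with curl, the query string can be assembled programmatically. The sketch below is one way to do that in Python. The endpoint, parameter names, and x-api-key header are taken from the curl examples above; the helper names build_crawl_url and build_request are hypothetical, and percent-encoding the target URL is an assumption about what the API accepts (it is standard practice for query strings, but the curl examples pass the URL unencoded).

```python
from urllib.parse import urlencode
from urllib.request import Request

# Endpoint as it appears in the curl examples.
API_ENDPOINT = "https://api.crawleo.dev/crawl"


def build_crawl_url(target_url: str, render_js: bool = False, markdown: bool = True) -> str:
    """Return the GET URL for one crawl request (hypothetical helper, not an official SDK)."""
    params = {"urls": target_url}
    if render_js:
        params["render_js"] = "true"
    if markdown:
        params["markdown"] = "true"
    # urlencode percent-encodes the target URL so that any "?" or "&" inside it
    # cannot be confused with the API's own query parameters (assumed to be accepted).
    return API_ENDPOINT + "?" + urlencode(params)


def build_request(target_url: str, api_key: str, render_js: bool = False) -> Request:
    """Wrap the URL in a urllib Request carrying the x-api-key header."""
    return Request(build_crawl_url(target_url, render_js=render_js),
                   headers={"x-api-key": api_key})
```

Passing the returned Request to urllib.request.urlopen would send it; that call is left out here so the sketch has no network side effects.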

Credit/cost note (important)

Per the Crawleo docs:

- HTTP crawl (render_js=false): 1 credit per URL

- JS rendering (render_js=true): 10 credits per URL

- Failed requests: 0 credits

So treat JS rendering as a “power tool”: use it when it’s needed, not by default.
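To see what the 1-versus-10 pricing means at scale, here is a small worked calculation. The crawl size (1,000 URLs, 100 of which need rendering) is a made-up illustration, and the fallback line assumes that a successful HTTP crawl is billed even when its content turns out to be sparse.

```python
HTTP_CREDITS = 1   # per URL with render_js=false (figure quoted above)
JS_CREDITS = 10    # per URL with render_js=true (figure quoted above)


def estimate_credits(http_requests: int, js_requests: int) -> int:
    """Total credits for a mix of successful HTTP and JS-rendered requests."""
    return http_requests * HTTP_CREDITS + js_requests * JS_CREDITS


# Hypothetical crawl: 1,000 URLs, 100 of which only work with rendering.
always_render = estimate_credits(0, 1000)      # 10000 credits
known_split = estimate_credits(900, 100)       # 900 + 1000 = 1900 credits
http_then_retry = estimate_credits(1000, 100)  # 1000 + 1000 = 2000 credits
```

Under these assumptions, rendering everything costs about five times as much as rendering only the pages that need it.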

When you probably don’t need JS rendering

Many high-value pages still work perfectly without browser rendering.

Common “no rendering needed” cases

- Documentation sites where the content is in the HTML (many doc platforms serve server-rendered pages).

- Blog articles/news pages that are mostly static.

- Landing pages where the copy is in the initial source.

- Simple product pages where the description/specs are server-rendered.

Even if a site uses JavaScript, you may not need rendering if the content you care about is already present in the initial HTML.
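One quick way to check this is to fetch the page once without rendering and look for a phrase you expect to find in the main content. The sketch below uses only Python's standard html.parser; the function names are hypothetical, and the check is a rough heuristic (text that exists only inside a script tag is deliberately not counted).

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect text nodes while skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is outside script/style blocks.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def content_in_initial_html(html: str, expected_phrase: str) -> bool:
    """True if the phrase appears in the page's visible text without any rendering."""
    return expected_phrase.lower() in visible_text(html).lower()
```

If the function returns True for the raw HTML, render_js=false is probably enough for that template; if it returns False, the text is likely injected by JavaScript.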

A practical decision checklist

Use this quick checklist for each new domain.

Choose render_js=false when:

- You primarily need text content and it appears in the first response.

- You want speed and cost efficiency at scale.

- You’re crawling many URLs and most are similar static templates.

Choose render_js=true when:

- The main content is missing or incomplete without rendering.

- The site is heavily app-like (SPA) and populates content after load.

- You need screenshots (per the Crawleo docs, screenshots are only available when render_js=true).

Recommended workflow: fallback to rendering only when needed

A safe, scalable approach is:

- Crawl using HTTP mode (render_js=false)

- Inspect the result:

  - If status is OK but content fields are sparse/empty, retry using render_js=true

This “fallback” strategy usually delivers the best balance of:

- Cost control

- Reliability

- Coverage across site types
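The fallback workflow can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Crawleo's client code: the response is assumed to be a dict with a "status" field and a "markdown" field, the set of OK status values is a placeholder, the 200-character threshold is arbitrary, and fetch is a function you supply that performs the actual API call.

```python
from typing import Any, Callable, Dict, Tuple

MIN_CONTENT_CHARS = 200                 # arbitrary threshold; tune it per site
OK_STATUSES = {"ok", "success", "200"}  # placeholder values; check the real response


def looks_sparse(result: Dict[str, Any], min_chars: int = MIN_CONTENT_CHARS) -> bool:
    """True when the returned text is too short to be the real page content."""
    content = result.get("markdown") or ""   # "markdown" key is an assumption
    return len(content.strip()) < min_chars


def crawl_with_fallback(
    url: str,
    fetch: Callable[[str, bool], Dict[str, Any]],
) -> Tuple[Dict[str, Any], bool]:
    """Crawl in HTTP mode first; retry with rendering only if content looks sparse.

    fetch(url, render_js) must perform the request and return the parsed response.
    Returns the final result and whether JS rendering was used.
    """
    result = fetch(url, False)
    status_ok = str(result.get("status", "")).lower() in OK_STATUSES
    if status_ok and looks_sparse(result):
        return fetch(url, True), True
    return result, False
```

Keeping fetch as a parameter means the retry logic can be tested with a stub, and the same function works whether the request is made with urllib, requests, or another HTTP client.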

Don’t overuse JS rendering: common pitfalls

1) Higher cost without better results

If the content is already in the HTML, rendering won’t improve extraction; it only increases the credits you spend (10 per URL instead of 1).

2) Rendering can change what you see

Some sites personalize content, show region-based banners, or load extra UI components. If you need consistent output, keep your settings stable and consider:

- Geolocation (country-level) when differences matter (geolocation=us, gb, etc.)

3) Screenshots are not a universal feature

Crawleo screenshots are only available when both of these are set:

- render_js=true

- screenshot=true (and optionally screenshot_full_page=true)

If you set screenshot=true without JS rendering, the screenshot option is ignored (per the docs).

Example:

curl -X GET "https://api.crawleo.dev/crawl?urls=https://example.com&render_js=true&screenshot=true&screenshot_full_page=true" \
  -H "x-api-key: YOUR_API_KEY"
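Because a screenshot request without rendering is ignored rather than rejected, the mistake is easy to miss. A small client-side check can catch it before the request is sent. The sketch below assumes you hold the query parameters in a dict of strings; the function name is hypothetical.

```python
def screenshot_will_be_ignored(params: dict) -> bool:
    """True if the params ask for a screenshot but do not enable JS rendering."""
    wants_screenshot = params.get("screenshot") == "true"
    renders_js = params.get("render_js") == "true"
    return wants_screenshot and not renders_js


# Parameter sets mirroring the query strings used in this guide.
with_rendering = {"urls": "https://example.com", "render_js": "true", "screenshot": "true"}
without_rendering = {"urls": "https://example.com", "screenshot": "true"}
```

Calling the check on without_rendering returns True, which is a cue to either add render_js=true (and accept the higher credit cost) or drop the screenshot option.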

Related Posts

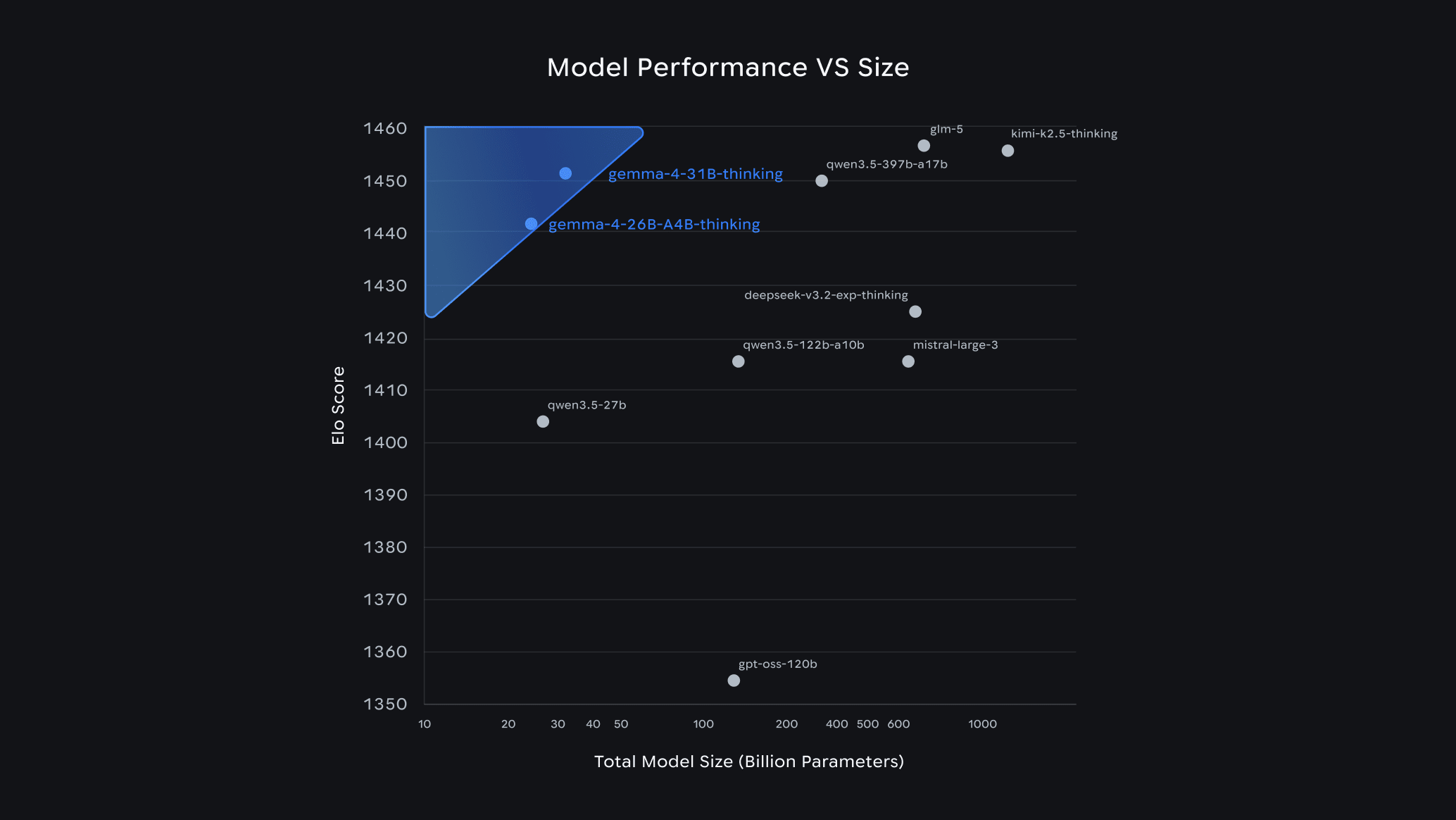

Gemma 4 Guide: How to Choose the Right Model & Run It Locally with Ollama

Google's Gemma 4 is one of the most capable open-source AI model families available today — and it runs completely free on your own hardware. In this guide, we break down each model variant, help you pick the right one for your setup, and walk you through a quick-start installation with Ollama.

LangChain v0.3 Tutorial & Migration Guide for 2026

Learn what’s new in LangChain v0.3 and how to migrate: Runnables, new agents, tools, middleware, MCP, and testing patterns for modern AI agents in Python.