LangChain v0.3 Tutorial & Migration Guide for 2026

LangChain v0.3 is more than a version bump—it refactors integrations, modernizes the agent stack, and introduces new utilities for chat models and tools. In this tutorial, you will see what changed, how to migrate existing chains and agents, and how to use the new patterns to build robust AI workflows on top of crawled or internal data.

1. What’s new in LangChain v0.3?

At a high level, v0.3 focuses on packaging cleanup, better model utilities, and a smoother developer experience for agents. Many changes are internal, but they directly affect how you install integrations, define tools, and manage callbacks.

Key highlights:

- More integrations moved out of `langchain-community` into dedicated `langchain-{name}` packages (for example, `langchain-openai`, `langchain-anthropic`), improving dependency isolation and versioning.

- Legacy implementations in `langchain-community` remain for now but are marked as deprecated and will be removed over time.

- Revamped documentation and API references, especially around integration setup and model configuration.

- Simplified tool definition and usage, with clearer decorators and runtime behavior for tools called from agents.

- New utilities for chat models (unified constructors, message trimming/merging helpers, rate limiting, custom events) to make long‑running or high‑traffic agents easier to manage.

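- Callbacks are backgrounded and non-blocking by default (the v0.3 release notes list this change under the JavaScript package), so if you rely on tracing or logging in serverless environments you need to wait for callbacks to finish before the function exits.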

These changes are designed to work hand in hand with LangGraph, which handles low‑level orchestration while LangChain gives you higher‑level agent building blocks.

2. Breaking changes that will hit your code

If you are upgrading from pre‑0.3 versions, some behavior and APIs have changed significantly. The most common breaking points you will see reported by developers are around chains, agents, and imports.

The big ones to watch:

- `LLMChain` is deprecated in favor of the Runnables API (such as `RunnableSequence` and related building blocks).

- The `.run()` method is deprecated in favor of `.invoke()` for executing chains and agents, so older examples calling `.run(prompt)` should be updated.

- `initialize_agent()` is deprecated; use factory helpers like `create_react_agent` or other agent constructors instead.

- Memory components such as `ConversationBufferMemory` are deprecated, and many related classes (chat message histories, vector stores) now live in integration packages, which leads to frequent import-path errors after upgrade.

- LangChain now uses Pydantic v2 internally, so older models and output parsers built on the `langchain_core.pydantic_v1` shim can fail, requiring either updates or explicit rebuilds of custom parsers.

Because of these changes, treating v0.3 as a “mini‑rewrite” rather than a drop‑in upgrade will save you time.
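To get a rough sense of how much code is affected, a small script can flag the legacy patterns listed above. This is a sketch using only the standard library, not an official tool: the pattern list is an assumption drawn from this section, and the `.run(` check will also match unrelated calls such as `subprocess.run(`, so treat the output as a list of places to review.

```python
import re

# Pattern -> hint. The list mirrors the breaking changes described above;
# extend it for your own codebase.
DEPRECATED_PATTERNS = {
    r"\bLLMChain\(": "compose prompt | model instead of LLMChain",
    r"\.run\(": "call .invoke() instead of .run()",
    r"\binitialize_agent\(": "use create_react_agent or another agent constructor",
    r"\bConversationBufferMemory\b": "review memory imports and replacements",
}


def find_deprecated_usage(source: str) -> list[tuple[int, str]]:
    """Return (line_number, hint) pairs for each legacy pattern found."""
    findings = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        for pattern, hint in DEPRECATED_PATTERNS.items():
            if re.search(pattern, line):
                findings.append((line_number, hint))
    return findings


# Invented sample input, used only to demonstrate the scan.
sample = '''chain = LLMChain(llm=model, prompt=prompt)
result = chain.run("What is Crawleo?")
agent = initialize_agent(tools, model)
'''

for line_number, hint in find_deprecated_usage(sample):
    print(f"line {line_number}: {hint}")
```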

3. Step‑by‑step migration guide to v0.3

Before touching code, you should plan the upgrade and apply it incrementally across services or micro‑repos. A deliberate migration strategy minimizes downtime for anything in production (for example, an AI‑powered crawler summarization pipeline).

3.1. Prepare and upgrade dependencies

- Pin and document your current working version before upgrading so you can roll back if needed.

- Upgrade LangChain to 0.3.x and ensure `langchain-core` and any integration packages (`langchain-openai`, `langchain-anthropic`, etc.) are installed explicitly.

- Remove any unused or implicit `langchain-community` dependencies if the corresponding dedicated packages exist.
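The pinning step can be sketched with the standard library's `importlib.metadata`. The package list below is an assumption based on the packages named in this guide, and the helper names are hypothetical; adjust both to your project.

```python
from importlib.metadata import PackageNotFoundError, version

# Package names taken from the steps above; add your own integrations.
PACKAGES = [
    "langchain",
    "langchain-core",
    "langchain-community",
    "langchain-openai",
    "langchain-anthropic",
]


def snapshot_versions(packages):
    """Map each package name to its installed version, or None if absent."""
    snapshot = {}
    for name in packages:
        try:
            snapshot[name] = version(name)
        except PackageNotFoundError:
            snapshot[name] = None
    return snapshot


def to_requirements(snapshot):
    """Render installed packages as pinned requirement lines for rollback."""
    return [f"{name}=={ver}" for name, ver in snapshot.items() if ver is not None]


# Print the pins; redirect this output to a file before upgrading.
for line in to_requirements(snapshot_versions(PACKAGES)):
    print(line)
```

Saving the printed lines to a file before the upgrade gives you an exact set of pins to reinstall if you need to roll back.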

Some community guidance also recommends using the langchain-cli migrate tool to automatically fix deprecated imports and patterns in bulk.

```bash
# Example: upgrading LangChain and adding core integrations
pip install -U "langchain==0.3.*" langchain-core langchain-openai

# Optional helper to update imports in bulk
pip install -U langchain-cli
langchain-cli migrate path/to/your/project
```

This kind of automated pass will not fix every issue, but it catches many import and naming changes in one sweep.

3.2. Move from LLMChain + .run() to Runnables + .invoke()

Existing code usually looks something like:

```python
# Old-style (pre-0.3); assumes `model` and `prompt` are already defined
from langchain.chains import LLMChain

chain = LLMChain(llm=model, prompt=prompt)
result = chain.run({"question": "What is Crawleo?"})
```

In v0.3, the recommended approach is to define a Runnable pipeline and call .invoke():

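```python
# `prompt | model` already builds a RunnableSequence, so no explicit
# constructor call is needed. `prompt` and `model` are the same objects
# used in the old-style example.
pipeline = prompt | model
result = pipeline.invoke({"question": "What is Crawleo?"})
```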

This pattern works consistently across synchronous and asynchronous calls, and it composes cleanly with tools and retrievers.

3.3. Replace initialize_agent() with modern agent helpers

Older tutorials and gists often use `initialize_agent` to build ReAct-style agents with tools. In v0.3, you should adopt helpers like `create_react_agent` (paired with `AgentExecutor` or similar wrappers). The higher-level `create_agent` abstraction belongs to the later LangChain 1.0 line, so treat it as a follow-up step rather than part of the 0.3 upgrade.

```python
from langchain import hub
from langchain.agents import AgentExecutor, create_react_agent
from langchain.tools import tool
from langchain_openai import ChatOpenAI


@tool
def lookup_url(url: str) -> str:
    """Fetch and summarize a URL previously crawled by your system."""
    ...


llm = ChatOpenAI(model="gpt-4o")

# create_react_agent requires a ReAct prompt exposing {tools}, {tool_names},
# {input}, and {agent_scratchpad}; this one is pulled from the LangChain Hub.
prompt = hub.pull("hwchase17/react")

agent = create_react_agent(llm, [lookup_url], prompt)
executor = AgentExecutor(agent=agent, tools=[lookup_url])
response = executor.invoke({"input": "Summarize yesterday's crawl results."})
```

This gives you an agent built on Runnables that works with LangChain's callbacks and tracing.

3.4. Fix imports, memory, and Pydantic issues

After you adopt Runnables and the new agent helpers, you will often still see errors around imports or custom Pydantic models. Common fixes include:

- Updating imports for memory, retrievers, and vector stores to their new modules or integration packages.

- Calling `model_rebuild()` on custom output parsers or Pydantic models that predate v2 compatibility.

- Running your full test suite (or at least key agent flows) and adjusting any code that depended on undocumented behavior.

A systematic “update → run tests → fix errors → repeat” loop is the safest way to complete migration for multi‑service systems.

4. Updated agent patterns and building blocks

Once your project runs on v0.3, you can start taking advantage of the newer agent patterns surfaced in recent tutorials. Several of them, notably middleware, arrive with the LangChain 1.0 line rather than 0.3 itself, so they are a next step after the migration. LangChain now emphasizes clean separation between models, tools, middleware, and orchestration (with LangGraph handling the lowest-level graph logic when needed).

4.1. Static vs dynamic models

LangChain's model layer distinguishes between static models (one fixed model configuration for the whole run) and dynamic models (switching models at runtime based on rules or middleware). A simple static setup might initialize a single chat model once and then call `.invoke()` on it for each request.

Dynamic models become powerful when combined with middleware such as `ModelFallbackMiddleware`, which can automatically fall back to a backup model or provider when the primary model fails. A related pattern is rule-based routing: for example, you might send most Crawleo summarization traffic to a fast model and switch to a more capable one when the prompt exceeds some complexity threshold.

4.2. Tools via decorators

Tools remain a core concept—an agent is only useful if it can call external systems like crawlers, vector stores, or databases. In modern LangChain, you typically define tools using decorators so that type hints and docstrings become part of the agent’s schema.

```python
from langchain.tools import tool


@tool
def search_crawleo_index(query: str, limit: int = 5) -> str:
    """Search the Crawleo index and return the top matching URLs."""
    # call your internal search API here
    ...
```

This approach makes tool behavior explicit, easier to document, and easier to test in isolation.

4.3. Middleware for logic and observability

Middleware is now a first‑class way to inject logic before or after agent steps, including logging, model selection, and guardrails. You can, for example, attach middleware that logs every tool call, enforces rate limits, or modifies prompts per request.

Built‑in middleware includes helpers for fallback models, tool selection assistance, and structured response handling, and you can add your own for custom policies. This is particularly useful in production environments where you must trace why an agent chose a certain action or ensure it respects content rules.

5. Real‑world patterns: chatbots, RAG, and content generation

The updated APIs still support the classic use cases (chatbots, document QA, and content generation) but with cleaner patterns. A JetBrains tutorial on LangChain demonstrates these scenarios with concise examples that map well onto v0.3 best practices.

5.1. Chatbots and support assistants

AI‑powered chatbots remain a canonical LangChain use case, often backed by a single chat model plus a handful of tools (search, database lookup, task execution). In v0.3, you can build them using Runnables and agents while layering middleware for analytics and guardrails.

For instance, a support bot for your infrastructure might use tools to look up documentation, query incident reports, and fetch monitoring dashboards, with middleware enforcing that sensitive information is redacted.
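The redaction step itself can be as simple as a function applied to every outgoing reply. The following is a minimal sketch with illustrative regular expressions; the patterns and placeholder labels are assumptions, and a real deployment would need a vetted list.

```python
import re

# Illustrative patterns only; real deployments need a vetted list.
REDACTION_PATTERNS = [
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "[REDACTED_EMAIL]"),
    (re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"), "[REDACTED_IP]"),
    (re.compile(r"\bsk-[A-Za-z0-9]{8,}\b"), "[REDACTED_KEY]"),
]


def redact(text: str) -> str:
    """Replace sensitive substrings before text leaves the agent."""
    for pattern, replacement in REDACTION_PATTERNS:
        text = pattern.sub(replacement, text)
    return text


# Invented reply, used only to demonstrate the redaction.
reply = "Contact ops@example.com; host 10.0.0.12 is down, key sk-abc12345XYZ."
print(redact(reply))
```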

5.2. Document question‑answering over crawled data

Document QA is a natural fit for Crawleo‑style workflows: crawl the web or internal sites, index content in a vector store, then let an agent answer questions on top. The JetBrains example uses a FAISS index of PyCharm documentation and a custom tool to retrieve relevant passages before the agent answers.

Your own stack might instead index HTML snapshots from Crawleo and expose a tool like search_crawleo_docs that retrieves snippets for the agent to ground its answers. This keeps responses accurate and auditable while benefiting from LangChain’s higher‑level orchestration.
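A toy version of such a retrieval function is sketched below. It scores snapshots by simple word overlap instead of vector similarity, and the `SNAPSHOTS` store and its contents are hypothetical; in practice you would query a vector store and wrap the function with `@tool`.

```python
# Hypothetical snapshot store: URL -> extracted page text.
SNAPSHOTS = {
    "https://example.com/pricing": "Plans start with a free tier and scale by crawl volume.",
    "https://example.com/docs/render": "Enable JavaScript rendering for pages built with client-side frameworks.",
    "https://example.com/docs/limits": "Rate limits apply per API key and reset every minute.",
}


def search_crawleo_docs(query: str, limit: int = 2) -> list[dict]:
    """Return the snapshots sharing the most words with the query."""
    query_terms = set(query.lower().split())
    scored = []
    for url, text in SNAPSHOTS.items():
        words = set(text.lower().replace(".", "").split())
        overlap = len(query_terms & words)
        if overlap:
            scored.append((overlap, url, text))
    # Highest overlap first; snapshots with no shared words are dropped.
    scored.sort(key=lambda item: item[0], reverse=True)
    return [{"url": url, "snippet": text} for _, url, text in scored[:limit]]


hits = search_crawleo_docs("javascript rendering for pages")
for hit in hits:
    print(hit["url"], "->", hit["snippet"])
```

Returning the URL alongside each snippet is what keeps answers auditable: the agent can cite the source it grounded on.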

5.3. Content generation from external sources

Another powerful pattern is generating newsletters, blog posts, or release notes from external sources like changelogs or documentation. The JetBrains tutorial shows an agent using a tool that fetches “What’s New in Python” pages and then turns them into a polished newsletter with defined structure and tone.

You can reuse this pattern to generate reports from freshly crawled sites: one tool fetches or aggregates crawl results, another agent turns them into marketing copy, analytics summaries, or internal briefs.

6. Advanced features: MCP, guardrails, testing, and IDE support

For production agents, v0.3 and the surrounding ecosystem also emphasize interoperability, safety, and testability. These are the features that matter once your prototype becomes a critical service.

- MCP adapter: The Model Context Protocol adapter lets your LangChain agent connect to external MCP servers (for example, a Postman MCP server) and treat them as tools, expanding what your agent can do without hard‑coding every integration.

- Guardrails via middleware: Guardrails can be implemented as middleware that inspects inputs and outputs, blocks disallowed content (such as specific keywords), or forces human‑in‑the‑loop approval for risky actions.

- Testing tools: LangChain's `GenericFakeChatModel` makes it easy to write unit tests that simulate LLM responses, while higher-level evaluators like AgentEvals support trajectory-based or LLM-judge integration tests.

- PyCharm integration: The PyCharm AI Agents Debugger plugin lets you visually inspect LangChain agents, including traces and LangGraph views, so you can debug complex workflows without scattering print statements.

For teams building crawlers, summarizers, or search‑augmented agents, combining these pieces with LangChain v0.3’s newer APIs gives a much more maintainable stack than the earlier “monolithic chain” style.

Related Posts

How to Connect Crawleo to LM Studio via MCP

Learn how to connect Crawleo to LM Studio using MCP (Model Context Protocol) and give your local AI models real-time web access. In just a few steps, unlock tools like web search, crawling, Google Search, Maps, and more—no SDKs required.

When to Use JavaScript Rendering (And When You Don’t Need It)

Not sure whether a page needs JavaScript rendering to crawl correctly? This guide shows when to use `render_js=true`, when standard HTTP crawling is enough, and how to use a cost-efficient fallback strategy with Crawleo.

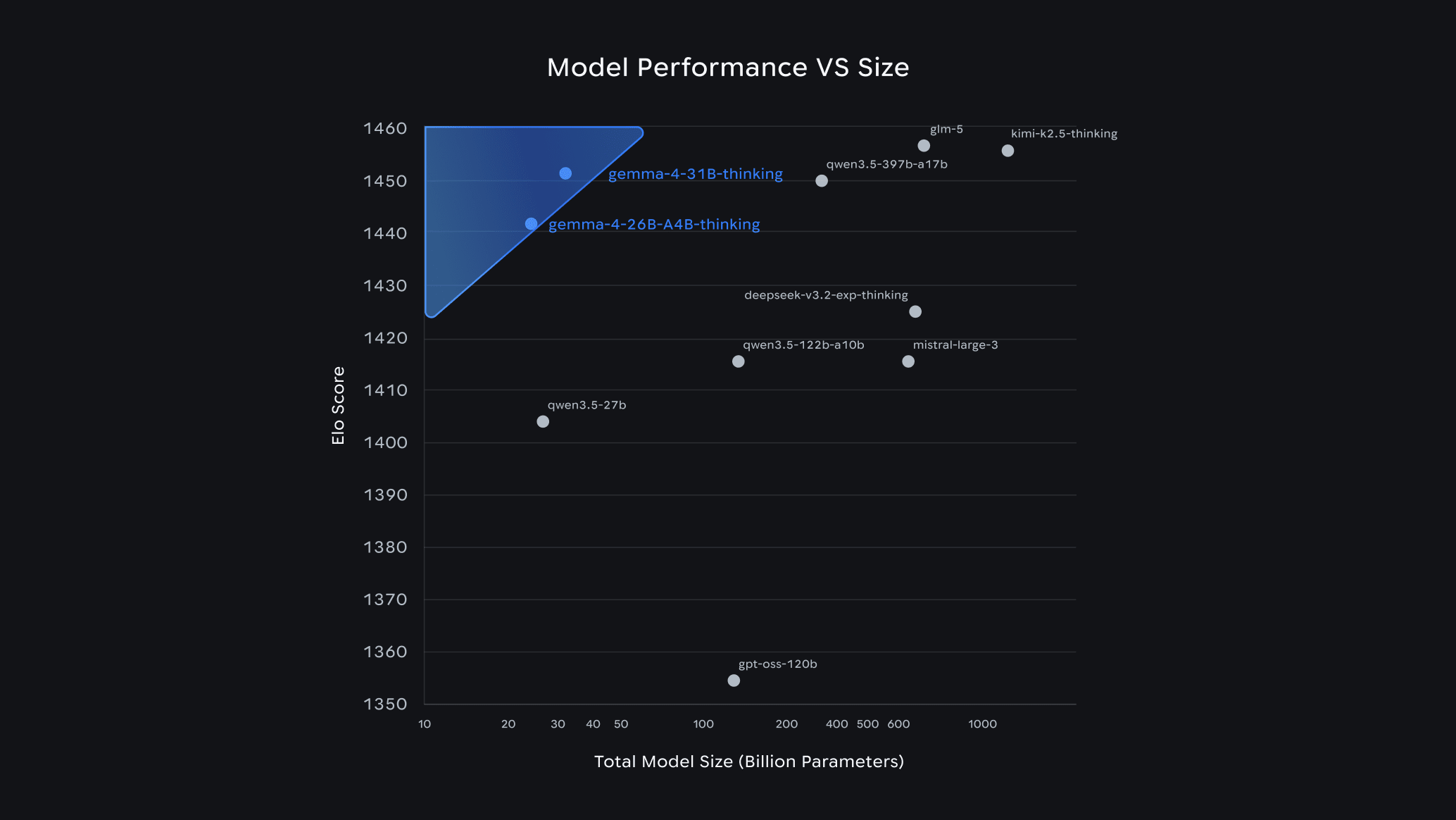

Gemma 4 Guide: How to Choose the Right Model & Run It Locally with Ollama

Google's Gemma 4 is one of the most capable open-source AI model families available today — and it runs completely free on your own hardware. In this guide, we break down each model variant, help you pick the right one for your setup, and walk you through a quick-start installation with Ollama.