Best SearchApi Alternatives (2026) – Crawleo vs. SearchApi for AI & Data Extraction

Crawleo vs. SearchApi: Which Web Data API Fits Your Needs?

In the world of automated data collection, "accessing the web" can mean two very different things: scraping search engine result pages (SERPs) to track rankings, or visiting websites to read and extract their actual content.

This distinction is the core difference between Crawleo and SearchApi. While both connect your code to the internet, they serve fundamentally different architectural roles.

At a Glance: The Comparison

| Feature | SearchApi | Crawleo |
|---|---|---|
| Primary Goal | SERP Analysis & Monitoring | AI-Native Search & Content Retrieval |
| Data Depth | Surface level (Titles, Snippets, URLs) | Deep level (Full page content, JS Rendering) |
| Core Output | Structured JSON | LLM-Ready Markdown / Clean HTML |
| Ideal For | SEO Tools & Market Research | AI Agents, RAG Pipelines & LLMs |
| Integration | REST API | REST API + Native MCP Support |

What is SearchApi?

SearchApi is a dedicated SERP (Search Engine Results Page) scraper. Its primary goal is to return the exact list of results you would see on Google, Bing, YouTube, or Amazon.

It excels at bypassing CAPTCHAs and managing complex proxy networks to provide a structured "snapshot" of a search engine.

- Focus: SERP Scraping (Google Search, Shopping, YouTube, etc.).

- Mechanism: You send a query (e.g., "iPhone 16 price"), and it returns the list of links, ad data, and snippets found on the search engine.

- Output: Rich JSON objects focused on rankings and metadata.

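As a rough illustration of what "rankings and metadata" look like in code, here is a minimal sketch that reads a SERP-style payload and finds where a domain ranks. It assumes a response shaped like the `organic_results` sample shown later in this article; the `find_rank` helper and the second sample entry are made up for this example and are not part of SearchApi.

```python
import json
from typing import Optional
from urllib.parse import urlparse

# Sample payload. The field names mirror the JSON example in this article;
# the second entry is invented so the sketch has more than one result.
SAMPLE_RESPONSE = """
{
  "organic_results": [
    {"position": 1, "title": "Example Site", "link": "https://example.com"},
    {"position": 2, "title": "Another Site", "link": "https://www.another.example/pricing"}
  ]
}
"""


def find_rank(serp: dict, domain: str) -> Optional[int]:
    """Return the position of the first organic result hosted on `domain`, or None."""
    for result in serp.get("organic_results", []):
        host = urlparse(result["link"]).netloc.lower()
        # Match the bare domain or any subdomain such as www.
        if host == domain or host.endswith("." + domain):
            return result["position"]
    return None


serp = json.loads(SAMPLE_RESPONSE)
print(find_rank(serp, "example.com"))
print(find_rank(serp, "another.example"))
print(find_rank(serp, "missing.example"))
```

A rank tracker is essentially this lookup repeated on a schedule for a list of keywords, which is why a SERP-only payload is enough for SEO use cases.
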
What is Crawleo?

Crawleo is a "Search & Crawl" API built specifically for the age of Generative AI. It doesn't just show you the search results; it acts as a bridge between the live web and Large Language Models (LLMs).

- Focus: AI-Native Retrieval & Deep Crawling.

- Mechanism: It searches the web, visits the target pages, executes JavaScript to handle dynamic content, and extracts the core information into a "noise-free" format.

- Privacy: Features a strict zero-data retention policy, ensuring sensitive AI queries remain private.

- Output: High-density Markdown or Text, optimized for token efficiency.

Key Differences: The Deep Dive

1. The "Depth" of Data

SearchApi stops at the door. It provides the "address book": the search result page, which tells you what pages exist, their titles, and a short snippet. To read the full text of an article found in those results, you would need to build or integrate a separate scraping service.

Crawleo enters the building. It provides search results and offers deep crawling in a single step. It can visit the URLs, handle the heavy lifting of rendering modern websites, and return the full article text. This eliminates the need for secondary "read" APIs in your workflow.
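
To make the difference concrete, the two-step "search, then read" workflow that a SERP-only API implies can be sketched as below. This is only an illustration: `search_then_read`, `fake_search`, and `fake_read_page` are hypothetical names, and the two stand-in functions replace real network calls so the sketch runs offline.

```python
from typing import Callable, Dict, List


def search_then_read(
    query: str,
    search: Callable[[str], List[Dict[str, str]]],
    read_page: Callable[[str], str],
    top_n: int = 3,
) -> List[Dict[str, str]]:
    """Step 1: get SERP entries. Step 2: fetch full text for the top `top_n` links."""
    documents = []
    for entry in search(query)[:top_n]:
        documents.append(
            {
                "title": entry["title"],
                "link": entry["link"],
                # This second call is the part a separate scraping service would handle.
                "content": read_page(entry["link"]),
            }
        )
    return documents


# Stand-ins for a SERP API call and a page scraper (both hypothetical).
def fake_search(query: str) -> List[Dict[str, str]]:
    return [{"title": "Example Site", "link": "https://example.com"}]


def fake_read_page(url: str) -> str:
    return f"Full text of {url}"


docs = search_then_read("iPhone 16 price", fake_search, fake_read_page)
print(docs[0]["content"])
```

With a combined search-and-crawl API, the `read_page` step and the glue around it would move behind a single request, which is the point of the comparison in this section.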

2. Output Optimization

- SearchApi (JSON for Developers): Returns nested JSON objects perfectly structured for programmatic analysis of rankings.

      { "organic_results": [ { "position": 1, "title": "Example Site", "link": "https://example.com" } ] }

- Crawleo (Markdown for AI): Returns content specifically formatted for LLMs. By providing clean Markdown, Crawleo reduces "token bloat" (unnecessary HTML/boilerplate), saving costs and improving the accuracy of AI summaries.

      # The Actual Article Title

      Here is the full content of the article, cleaned of ads and navigation menus...
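
The "token bloat" point can be illustrated with a back-of-the-envelope comparison. The sketch below assumes roughly four characters per token, which is a crude rule of thumb and not a real tokenizer, and it uses an invented HTML page wrapped around the same article text. The actual savings depend on the page and on the model's tokenizer.

```python
def estimate_tokens(text: str) -> int:
    """Very rough estimate: about 4 characters per token (an assumption, not a tokenizer)."""
    return max(1, len(text) // 4)


# Invented sample page: the same article wrapped in typical boilerplate.
RAW_HTML = (
    '<html><head><title>Example</title><script src="/tracker.js"></script></head>'
    '<body><nav><a href="/">Home</a><a href="/about">About</a></nav>'
    '<div class="ad-banner">Sponsored</div>'
    "<article><h1>The Actual Article Title</h1>"
    "<p>Here is the full content of the article.</p></article>"
    "<footer>Copyright notice</footer></body></html>"
)

CLEAN_MARKDOWN = "# The Actual Article Title\n\nHere is the full content of the article.\n"

html_tokens = estimate_tokens(RAW_HTML)
md_tokens = estimate_tokens(CLEAN_MARKDOWN)
savings = 1 - md_tokens / html_tokens

print(f"HTML: ~{html_tokens} tokens, Markdown: ~{md_tokens} tokens, saved: {savings:.0%}")
```

For a real measurement, swap `estimate_tokens` for the tokenizer that matches your model.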

3. The Integration Ecosystem

SearchApi follows the standard REST API model, making it a reliable choice for traditional backend services.

Crawleo expands on this by offering native Model Context Protocol (MCP) support. This allows AI assistants such as Claude Desktop, Cursor, or Windsurf to "use" Crawleo as a direct tool. It enables AI agents to search and browse the web in real time to answer complex questions without the developer needing to write custom integration code.
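
For readers curious about what happens under the hood, MCP clients talk to tool servers using JSON-RPC 2.0 messages, and a tool invocation is a `tools/call` request. The sketch below builds such a message by hand purely for illustration. The tool name `web_search` and its `query` argument are hypothetical placeholders, not Crawleo's documented tool names, and in practice the AI assistant generates these messages for you.

```python
import json
from itertools import count

# Simple incrementing request IDs, as JSON-RPC requests need a unique id.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# "web_search" and "query" are hypothetical names used only for this example.
request = build_tool_call("web_search", {"query": "iPhone 16 price"})
print(request)
```

The practical benefit is that none of this needs to be written by the developer: once the server is registered in the assistant's MCP settings, the assistant discovers the available tools and issues these calls itself.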

Summary: Which One Should You Choose?

Choose SearchApi if:

- You are building SEO tools (rank trackers, keyword analyzers).

- You need to monitor Google Shopping prices or YouTube rankings.

- You only need metadata (titles, snippets, and URLs) rather than the full body text of the pages.

- You require access to specific vertical engines like Google Images or Amazon Search.

Choose Crawleo if:

- You are building an AI Agent, Chatbot, or RAG pipeline.

- You need to feed live web knowledge into an LLM with minimal preprocessing.

- You want Markdown output to optimize for context window limits and token costs.

- You need a single API that handles both finding pages (search) and reading them (crawling).

- Privacy and zero data retention are requirements for your application.

Conclusion

If your goal is to analyze search engine rankings and market trends, SearchApi is the purpose-built choice. However, if your goal is to give an AI the ability to read and process the live web, Crawleo provides the specialized, "AI-ready" infrastructure that generative workflows need.

Related Posts

How to Connect Crawleo to LM Studio via MCP

Learn how to connect Crawleo to LM Studio using MCP (Model Context Protocol) and give your local AI models real-time web access. In just a few steps, unlock tools like web search, crawling, Google Search, Maps, and more—no SDKs required.

When to Use JavaScript Rendering (And When You Don’t Need It)

Not sure whether a page needs JavaScript rendering to crawl correctly? This guide shows when to use `render_js=true`, when standard HTTP crawling is enough, and how to use a cost-efficient fallback strategy with Crawleo.

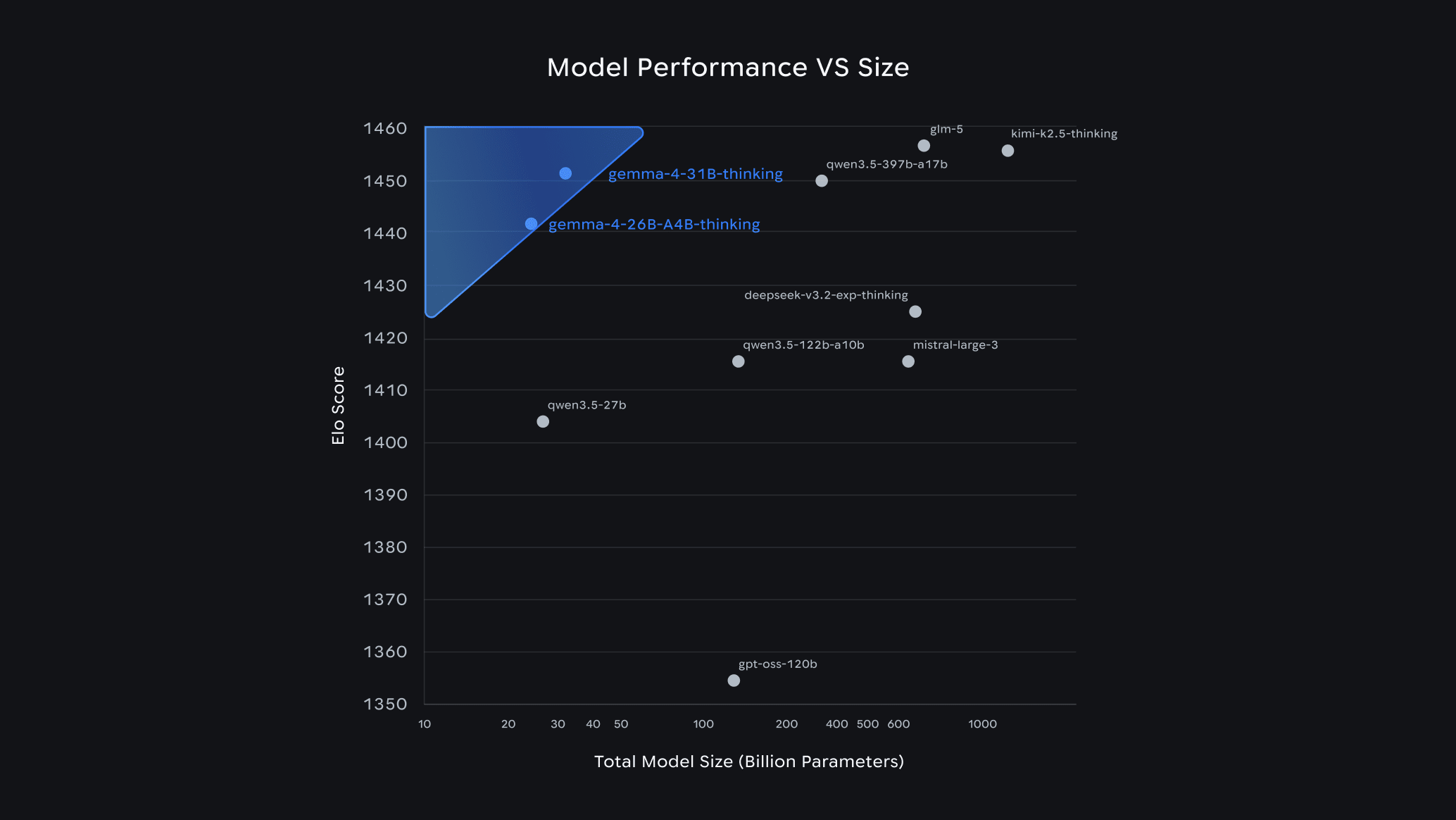

Gemma 4 Guide: How to Choose the Right Model & Run It Locally with Ollama

Google's Gemma 4 is one of the most capable open-source AI model families available today — and it runs completely free on your own hardware. In this guide, we break down each model variant, help you pick the right one for your setup, and walk you through a quick-start installation with Ollama.