How to Add a Custom MCP Server to IntelliJ AI Assistant for Live Web Search

Step 1: Open MCP Settings

Navigate to your assistant settings using the following path:

Settings > Tools > AI Assistant > Model Context Protocol (MCP)

This section manages all MCP servers connected to your assistant.

Step 2: Add a New MCP Server

In the MCP window:

- Click the Add icon in the top-left corner.

- Paste the following configuration, replacing `YourAPIKey` with your actual API key.

    {
      "mcpServers": {
        "crawleo": {
          "url": "https://api.crawleo.dev/mcp",
          "transport": "http",
          "headers": {
            "Authorization": "Bearer YourAPIKey"
          }
        }
      }
    }

- Click OK to save the configuration.
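To avoid keeping a live key in a file that might be shared, the configuration can be generated from an environment variable and then pasted into the dialog. The following is a minimal Python sketch, not part of the Crawleo or JetBrains tooling; the function name `build_mcp_config` and the variable name `CRAWLEO_API_KEY` are assumptions made for illustration.

```python
import json
import os


def build_mcp_config(api_key: str) -> dict:
    """Return the MCP configuration from this guide with the given key filled in."""
    if not api_key or api_key == "YourAPIKey":
        raise ValueError("Provide a real API key, not an empty value or the placeholder")
    return {
        "mcpServers": {
            "crawleo": {
                "url": "https://api.crawleo.dev/mcp",
                "transport": "http",
                "headers": {"Authorization": f"Bearer {api_key}"},
            }
        }
    }


if __name__ == "__main__":
    # CRAWLEO_API_KEY is a hypothetical variable name; use whatever your shell defines.
    key = os.environ.get("CRAWLEO_API_KEY", "")
    if key:
        print(json.dumps(build_mcp_config(key), indent=2))
    else:
        print("Set CRAWLEO_API_KEY before running this script.")
```

Running the script prints JSON that can be copied straight into the MCP window.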

Step 3: Apply and Start the MCP Server

After saving, you will see the server listed with a Not Started state.

To activate it:

- Click Apply in the settings window.

- Wait for the assistant to reload the configuration.

Once reloaded, the server state should change to Started, confirming that the MCP server is active and ready to use.

Step 4: Use MCP Tools in Chat

With the MCP server running, you can now call its tools directly from the chat interface.

Available commands include:

- `/crawl_web` for crawling specific URLs

- `/search_web` for live web search queries

These commands allow your AI assistant to retrieve real-time web content instead of relying on static or cached knowledge.
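Behind the chat commands, an MCP client invokes a tool by sending a JSON-RPC 2.0 `tools/call` request to the server. The Python sketch below only builds such a message to show its shape; it does not send anything. The argument names `query` and `url` are assumptions, since the real input schema is whatever the server advertises for each tool.

```python
import json
from itertools import count

# Each JSON-RPC request carries its own id so responses can be matched to it.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 message that asks an MCP server to run one tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# "query" and "url" are hypothetical argument names used for illustration.
search_request = build_tool_call("search_web", {"query": "latest Kotlin release notes"})
crawl_request = build_tool_call("crawl_web", {"url": "https://example.com"})

print(json.dumps(search_request, indent=2))
```

The assistant builds and sends these messages for you; seeing the shape is mainly useful when reading server logs or debugging a tool that does not respond.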

Common Troubleshooting Tips

- If the server remains in the Not Started state, double-check your API key and click Apply again.

- Ensure your network allows outbound HTTPS connections.

- Restart your AI assistant or IDE if the state does not update.
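Several of these problems can be caught before the configuration ever reaches the IDE. The sketch below is a small, hypothetical Python checker (the name `find_config_problems` is not part of any tool) that looks for invalid JSON, a non-HTTPS URL, and a missing or placeholder API key. Its checks are tailored to the configuration in this guide and are not a general MCP validator.

```python
import json
from urllib.parse import urlparse

PLACEHOLDER = "YourAPIKey"


def find_config_problems(config_text: str) -> list[str]:
    """Return human-readable problems found in an MCP configuration string."""
    try:
        config = json.loads(config_text)
    except json.JSONDecodeError as exc:
        return [f"Invalid JSON: {exc.msg} (line {exc.lineno})"]

    servers = config.get("mcpServers") if isinstance(config, dict) else None
    if not isinstance(servers, dict) or not servers:
        return ["Missing or empty 'mcpServers' object"]

    problems = []
    for name, entry in servers.items():
        if not isinstance(entry, dict):
            problems.append(f"{name}: entry must be a JSON object")
            continue
        url = entry.get("url")
        if not isinstance(url, str) or urlparse(url).scheme != "https":
            problems.append(f"{name}: 'url' should be an https:// address")
        headers = entry.get("headers")
        auth = headers.get("Authorization") if isinstance(headers, dict) else None
        if not isinstance(auth, str) or not auth.startswith("Bearer "):
            problems.append(f"{name}: 'Authorization' should start with 'Bearer '")
        else:
            key = auth[len("Bearer "):].strip()
            if not key or key == PLACEHOLDER:
                problems.append(f"{name}: API key is empty or still the placeholder")
    return problems
```

An empty list means none of these particular mistakes were found; it does not prove that the key itself is valid, which only the server can confirm.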

Why Use MCP for Web Search

Using MCP servers provides several benefits:

- Standardized tool integration across AI platforms

- Secure API-based access with scoped permissions

- Live, up-to-date web data for AI workflows

- No custom plugin or extension code required

This makes MCP ideal for developers building AI agents, RAG pipelines, or automation workflows that depend on fresh web information.

Final Notes

Once configured, MCP servers run seamlessly in the background and extend your AI assistant with powerful external capabilities. You can add multiple MCP servers to combine different tools and services in a single chat interface.
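As a rough illustration of combining servers, the Python sketch below merges a second entry into the same `mcpServers` object. It assumes the settings dialog accepts several entries in one object, as the map-shaped format suggests; the `docs-search` name and its URL are made up for the example, and `add_server` is a hypothetical helper.

```python
import copy
import json


def add_server(config: dict, name: str, entry: dict) -> dict:
    """Return a copy of config with one more entry under 'mcpServers'."""
    merged = copy.deepcopy(config)
    servers = merged.setdefault("mcpServers", {})
    if name in servers:
        raise KeyError(f"Server '{name}' is already configured")
    servers[name] = entry
    return merged


base = {
    "mcpServers": {
        "crawleo": {
            "url": "https://api.crawleo.dev/mcp",
            "transport": "http",
            "headers": {"Authorization": "Bearer YourAPIKey"},
        }
    }
}

# "docs-search" and its URL are placeholders, not a real service.
combined = add_server(
    base,
    "docs-search",
    {"url": "https://mcp.example.com/mcp", "transport": "http"},
)

print(json.dumps(combined, indent=2))
```

Refusing duplicate names keeps one server from silently overwriting another when configurations are assembled from several sources.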

For advanced use cases, consider chaining MCP tools inside automated agent workflows or IDE-based assistants.

Related Posts

How to Connect Crawleo to LM Studio via MCP

Learn how to connect Crawleo to LM Studio using MCP (Model Context Protocol) and give your local AI models real-time web access. In just a few steps, unlock tools like web search, crawling, Google Search, Maps, and more—no SDKs required.

When to Use JavaScript Rendering (And When You Don’t Need It)

Not sure whether a page needs JavaScript rendering to crawl correctly? This guide shows when to use `render_js=true`, when standard HTTP crawling is enough, and how to use a cost-efficient fallback strategy with Crawleo.

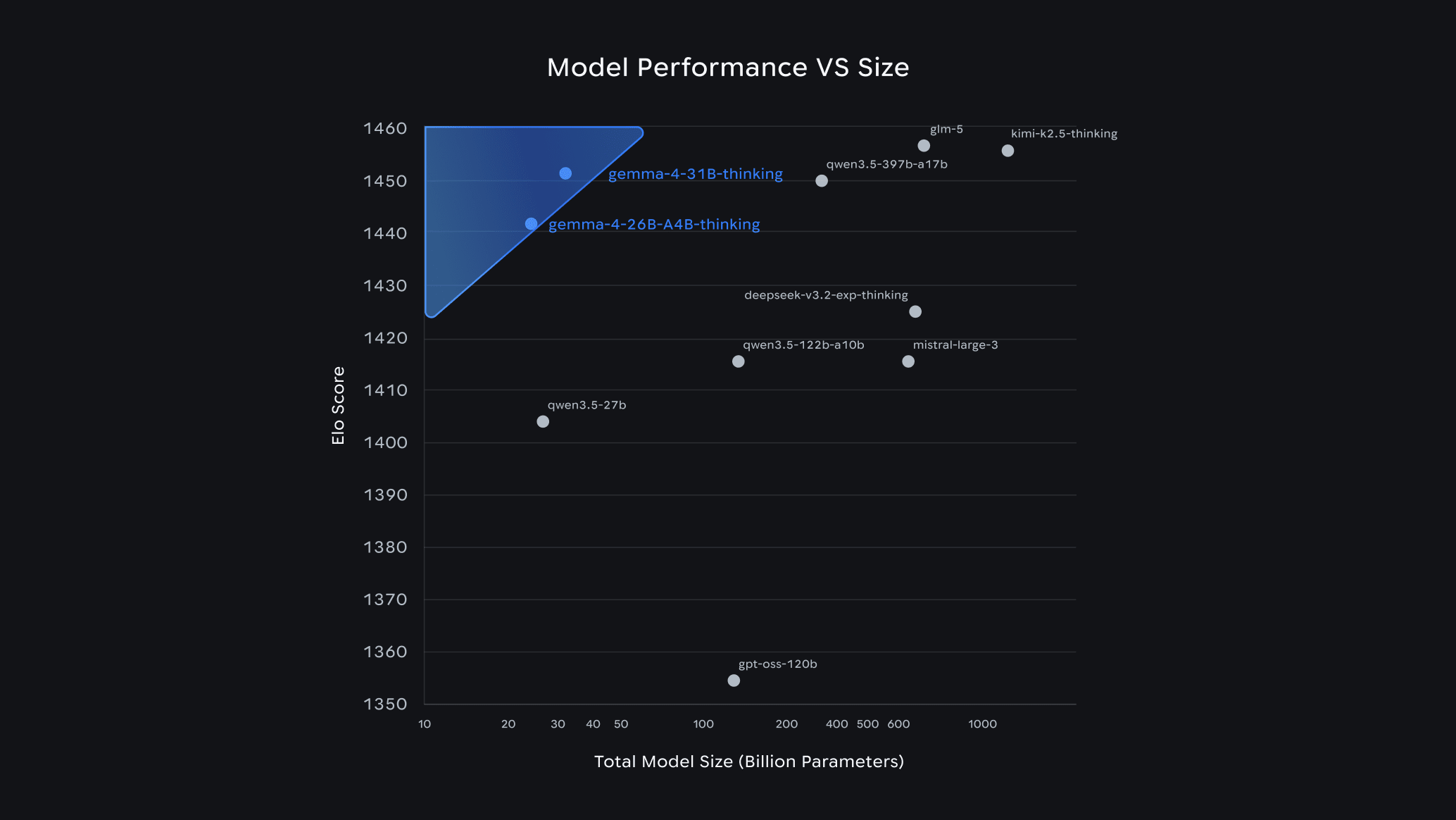

Gemma 4 Guide: How to Choose the Right Model & Run It Locally with Ollama

Google's Gemma 4 is one of the most capable open-source AI model families available today — and it runs completely free on your own hardware. In this guide, we break down each model variant, help you pick the right one for your setup, and walk you through a quick-start installation with Ollama.