Make Antigravity crawl the web in real time, without getting blocked, for free

1. Open MCP Server Settings

From the Agent Panel in Antigravity IDE:

- Click Additional options (the three dots in the top‑right corner)

- Click MCP Servers

- Click Manage MCP Servers in the top-right corner

A new tab called Manage MCPs will appear in the editor.

2. Open Raw MCP Configuration

Inside the Manage MCPs tab:

- Click View raw config

This opens the raw MCP JSON configuration file.

3. Add Crawleo MCP Configuration

Paste the following JSON into the configuration file:

{
  "mcpServers": {
    "crawleo": {
      "serverUrl": "https://api.crawleo.dev/mcp",
      "transport": "http",
      "headers": {
        "Authorization": "Bearer API_KEY"
      }
    }
  }
}

Replace API_KEY with your actual Crawleo API key.

Example:

"Authorization": "Bearer ck_live_xxxxxxxxxxxxxxxxx"

Save the file after editing.
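Pasting the whole object over an existing file replaces any MCP servers already configured there. If you already have other servers, a safer route is to merge the `crawleo` entry into the existing `mcpServers` object. The Python sketch below does that; the helper names are hypothetical, and the file path is an assumption, since this guide does not say where the raw config lives on disk.

```python
import copy
import json
from pathlib import Path

CRAWLEO_URL = "https://api.crawleo.dev/mcp"


def merge_crawleo(config: dict, api_key: str) -> dict:
    """Return a copy of `config` with the "crawleo" server added or replaced."""
    merged = copy.deepcopy(config)  # leave the caller's dict untouched
    servers = merged.setdefault("mcpServers", {})
    servers["crawleo"] = {
        "serverUrl": CRAWLEO_URL,
        "transport": "http",
        # strip() removes stray whitespace picked up when copying the key
        "headers": {"Authorization": f"Bearer {api_key.strip()}"},
    }
    return merged


def update_config_file(path: Path, api_key: str) -> None:
    """Read the raw MCP config at `path` (if any), merge Crawleo in, write it back."""
    text = path.read_text(encoding="utf-8") if path.exists() else ""
    config = json.loads(text) if text.strip() else {}
    path.write_text(json.dumps(merge_crawleo(config, api_key), indent=2) + "\n",
                    encoding="utf-8")
```

Other servers under `mcpServers` are carried over unchanged; only the `crawleo` key is overwritten, so running it twice is harmless.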

4. Refresh MCP Servers

- Go back to the Manage MCPs tab

- Click Refresh

If the configuration is valid, Antigravity IDE will register the Crawleo MCP server.
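Registering a remote MCP server starts with a JSON-RPC `initialize` request sent over HTTP with your `Authorization` header. To test the key outside the IDE, you can send a similar request yourself. This Python sketch follows the MCP streamable HTTP transport; the protocol revision string, the client name, and the assumption that Crawleo answers a bare `initialize` POST this way are illustrative, not taken from Crawleo's documentation.

```python
import json
import urllib.error
import urllib.request

MCP_URL = "https://api.crawleo.dev/mcp"


def build_initialize_request(api_key: str, url: str = MCP_URL) -> urllib.request.Request:
    """Build the JSON-RPC `initialize` POST an MCP client sends first."""
    body = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "initialize",
        "params": {
            # Assumption: the server accepts this MCP protocol revision.
            "protocolVersion": "2025-03-26",
            "capabilities": {},
            "clientInfo": {"name": "crawleo-config-check", "version": "0.1"},
        },
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Streamable HTTP clients must accept both JSON and SSE replies.
            "Accept": "application/json, text/event-stream",
            "Authorization": f"Bearer {api_key.strip()}",
        },
    )


def probe(api_key: str) -> int:
    """Send the request and return the HTTP status code."""
    try:
        with urllib.request.urlopen(build_initialize_request(api_key), timeout=15) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # e.g. 401 when the key is rejected
```

Calling `probe("ck_live_...")` with your key should return a 2xx status if the server accepts it; a 401 corresponds to the "Unauthorized" failure described under Troubleshooting.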

What You Get After Setup

After completing this setup, Antigravity IDE will expose two Crawleo MCP tools:

- search_web for real-time web search

- crawl_web for direct page crawling
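An MCP client discovers these tools with the `tools/list` method and runs one with `tools/call`. The Python sketch below builds both JSON-RPC message shapes so you can see what a call to `search_web` or `crawl_web` carries. The argument names `query` and `url` are hypothetical; this guide does not list the tools' parameters, and the real ones come from each tool's `inputSchema` in the `tools/list` response.

```python
import itertools
import json

# Monotonic JSON-RPC request ids for this sketch.
_next_id = itertools.count(1)


def list_tools() -> dict:
    """MCP request that asks the server which tools it exposes."""
    return {"jsonrpc": "2.0", "id": next(_next_id), "method": "tools/list"}


def call_tool(name: str, arguments: dict) -> dict:
    """MCP request that invokes one tool with JSON arguments."""
    return {
        "jsonrpc": "2.0",
        "id": next(_next_id),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


# Hypothetical argument names ("query", "url"); check the tool's inputSchema.
search = call_tool("search_web", {"query": "MCP streamable HTTP transport"})
crawl = call_tool("crawl_web", {"url": "https://example.com"})
print(json.dumps(search, indent=2))
```

Inside Antigravity the agent builds these messages for you; the sketch only shows what travels over the connection when it uses a Crawleo tool.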

Troubleshooting

Error: Unauthorized or Stream Failure

If you see the following error:

Error: streamable http connection failed: calling "initialize": sending "initialize": Unauthorized, sse fallback failed: missing endpoint: first event is "", want "endpoint".

Cause:

- The Crawleo API key in the configuration is invalid or was entered incorrectly

Fix:

- Double‑check the API key

- Ensure there are no extra spaces or missing characters

- Confirm the key is active in your Crawleo dashboard

After correcting the key, refresh MCP servers again.
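The whitespace and missing-character checks from the list above can be automated before you refresh. This is a minimal Python sketch; the helper name is hypothetical, and it assumes a valid key never contains whitespace and that the `Bearer ` prefix belongs only in the header template, not in the key itself. It cannot tell whether a key is active; that still requires the Crawleo dashboard.

```python
def check_api_key(raw: str) -> list[str]:
    """Return problems found in a pasted API key (an empty list means none found)."""
    problems = []
    key = raw.strip()
    if raw != key:
        problems.append("leading or trailing whitespace")
    if not key:
        problems.append("key is empty")
    if key.lower().startswith("bearer "):
        # The config already writes "Bearer " before the key.
        problems.append('key already includes "Bearer "')
    if key == "API_KEY":
        problems.append("placeholder API_KEY was not replaced")
    if any(ch.isspace() for ch in key):
        problems.append("whitespace inside the key")
    return problems


print(check_api_key(" ck_live_xxxxxxxxxxxxxxxxx\n"))
```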

Tags

Related Posts

How to Connect Crawleo to LM Studio via MCP

Learn how to connect Crawleo to LM Studio using MCP (Model Context Protocol) and give your local AI models real-time web access. In just a few steps, unlock tools like web search, crawling, Google Search, Maps, and more—no SDKs required.

When to Use JavaScript Rendering (And When You Don’t Need It)

Not sure whether a page needs JavaScript rendering to crawl correctly? This guide shows when to use `render_js=true`, when standard HTTP crawling is enough, and how to use a cost-efficient fallback strategy with Crawleo.

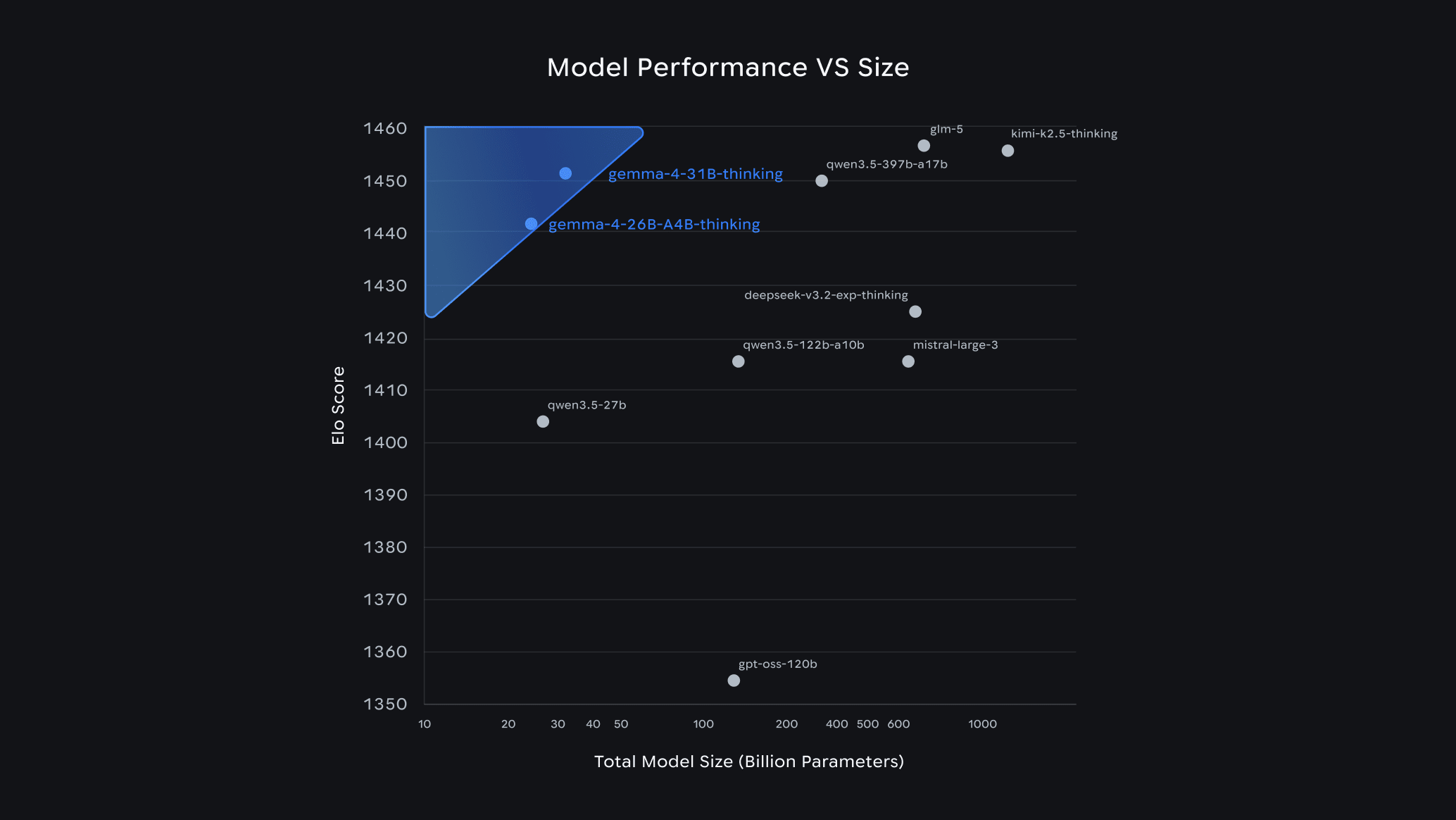

Gemma 4 Guide: How to Choose the Right Model & Run It Locally with Ollama

Google's Gemma 4 is one of the most capable open-source AI model families available today — and it runs completely free on your own hardware. In this guide, we break down each model variant, help you pick the right one for your setup, and walk you through a quick-start installation with Ollama.